The core problem is that creating a digital identity is usually free and instant. When the cost of entry is zero, attackers can flood a network, manipulate reviews, swing governance votes, or even crash a peer-to-peer service. This is why Reputation Systems are so critical. They act as a filter, ensuring that influence isn't just about how many accounts you have, but how much trust you've actually earned through consistent, honest behavior over time.

The Mechanics of a Sybil Attack

To understand how to stop these attacks, we first have to look at why they work. Three main factors make a network vulnerable: how cheap it is to create an account, whether the system accepts strangers without a "chain of trust," and whether it treats every identity exactly the same. In many early peer-to-peer networks, identities were just abstractions with no link to a real-world entity. This created a many-to-one mapping in which one human could easily control dozens of network nodes.

A classic example of this vulnerability is the BitTorrent Mainline DHT. Researchers found that because identities are so easy to generate in that system, large-scale attacks remain feasible. An attacker who can cheaply spin up thousands of nodes can effectively "eclipse" parts of the network or redirect traffic, which shows that simply being distributed isn't the same as being secure.

Economic Friction: Making Fake Identities Expensive

The most direct way to fight Sybils is to make it hurt the attacker's wallet. We call this economic friction. If every identity costs something, creating a million of them quickly becomes prohibitively expensive. This is the fundamental logic behind the most famous blockchain mechanisms:

- Proof-of-Work (PoW): Requires computational power. You can't just "claim" to be a miner; you have to prove you spent electricity and hardware cycles to solve a puzzle.

- Proof-of-Stake (PoS): Requires locking up financial value. To have a say in the network, you must stake tokens. If you try to cheat, the network can "slash" your stake, taking your money away.
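
To make the Proof-of-Work idea concrete, here is a minimal hashcash-style sketch. It is an illustration only, not the puzzle any particular blockchain uses: the function names, the `identity:nonce` encoding, and the `difficulty_bits` parameter are all assumptions made for this example.

```python
import hashlib
from itertools import count


def _meets_target(identity: str, nonce: int, difficulty_bits: int) -> bool:
    # Hash the identity together with the nonce and compare against a target.
    # A larger difficulty_bits means a smaller target and more expected work.
    digest = hashlib.sha256(f"{identity}:{nonce}".encode()).digest()
    return int.from_bytes(digest, "big") < (1 << (256 - difficulty_bits))


def solve_puzzle(identity: str, difficulty_bits: int) -> int:
    """Brute-force a nonce; expected cost is about 2**difficulty_bits hashes."""
    for nonce in count():
        if _meets_target(identity, nonce, difficulty_bits):
            return nonce


def verify(identity: str, nonce: int, difficulty_bits: int) -> bool:
    """Checking a claimed solution costs a single hash."""
    return _meets_target(identity, nonce, difficulty_bits)


# Hypothetical identity name; 12 bits keeps the demo fast.
nonce = solve_puzzle("node-42", difficulty_bits=12)
print(verify("node-42", nonce, difficulty_bits=12))
```

The asymmetry is the point: solving takes thousands of hashes per identity, while verifying takes one, so each additional fake identity carries a real cost.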

Modern networks like the Arcium Network take this further. They use a two-tiered defense. First, they implement Intra-Cluster Sybil Resistance to stop nodes within a small group from colluding. Second, they use Network-Wide Sybil Resistance by randomly inserting at least one independent node into every non-permissioned cluster. This acts as a "spy in the house" for the network, ensuring that a small group of fake accounts can't secretly take over a specific section of the system.

| Strategy | Primary Mechanism | Cost to Attacker | Trade-off |
|---|---|---|---|
| Economic Friction | Staking / Mining | High (Financial/Hardware) | Higher entry barrier for new users |
| Identity Proofs | Biometrics / ZKPs | Medium (Verification effort) | Privacy concerns / Complexity |
| Reputation Systems | Behavioral History | High (Time/Consistency) | Slow to build trust (Cold start) |
| Social Graph Analysis | Relationship Mapping | Medium (Social engineering) | Requires existing network of trust |

Web3 Identity and the Privacy Paradox

We've all seen how traditional platforms handle this. Facebook and Twitter employ armies of moderators and AI filters to ban bots in waves. But this is a centralized solution. In Web3, we want to prove we are human without handing over our passport to a corporation. This is where Zero-Knowledge Proofs (ZKP) come in.

A ZKP allows a user to prove a statement is true (e.g., "I am a unique human") without revealing the underlying data (e.g., "Here is my government ID"). By combining ZKPs with biometric checks or wallet-bound credentials, networks can verify the "truth" of a user's uniqueness without ever knowing who that user actually is. This effectively makes identity scarce again without sacrificing anonymity.

Advanced Detection: Following the Digital Breadcrumbs

Even with economic barriers, a determined attacker might still try to sneak in. This is where behavioral analysis and social graphs become the last line of defense. Real humans are messy; bots are predictable. Machine learning systems now monitor on-chain behavior, looking for patterns that scream "bot."

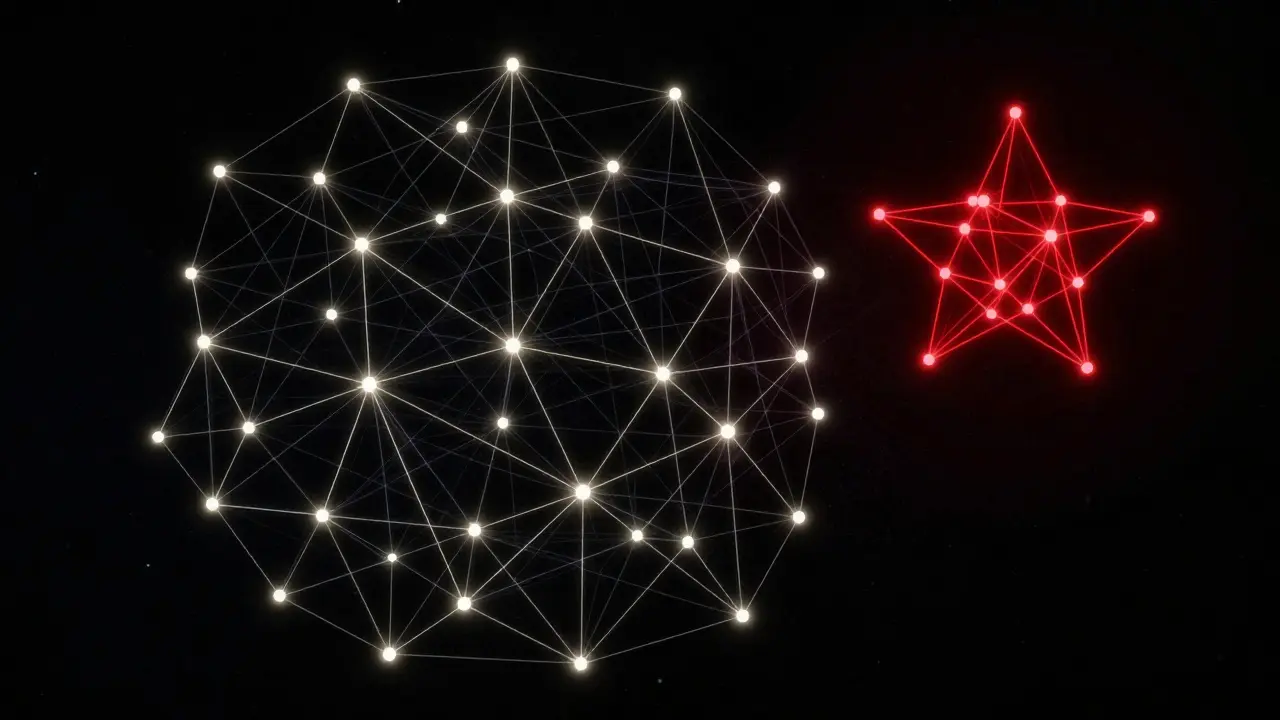

For example, if a thousand wallets all perform the exact same sequence of transactions at precisely the same interval, it's highly unlikely they are separate people. Similarly, social graph analysis examines how wallets interact. Real users have a complex web of organic connections. Fake accounts, however, often exist in "clusters": they might all be funded by a single master wallet or only interact with each other. By identifying these isolated islands of activity, networks can flag and neutralize Sybil clusters before they can influence a vote.
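
The "single master wallet" pattern can be sketched as a simple grouping step. This is a toy heuristic under stated assumptions: the `(funder, wallet)` edge format, the function name, and the `min_size` threshold are all hypothetical, and a real system would need to exclude exchanges and other legitimate services that fund many wallets.

```python
from collections import defaultdict


def flag_funding_clusters(funding_edges, min_size=5):
    """Group wallets by the first address that funded them.

    funding_edges: iterable of (funder, wallet) pairs in chronological order.
    Returns {funder: set_of_wallets} for groups with at least min_size wallets.
    """
    first_funder = {}
    for funder, wallet in funding_edges:
        # Keep only the earliest funder seen for each wallet.
        first_funder.setdefault(wallet, funder)

    groups = defaultdict(set)
    for wallet, funder in first_funder.items():
        groups[funder].add(wallet)

    return {f: ws for f, ws in groups.items() if len(ws) >= min_size}


# Hypothetical data: one master wallet funds six fresh wallets.
edges = [("master", f"wallet-{i}") for i in range(6)] + [("alice", "bob")]
print(flag_funding_clusters(edges))
```

A flagged group is a candidate for closer review, not proof of a Sybil cluster on its own.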

Building a Sybilproof Reputation Function

From a mathematical perspective, the goal is to create a "sybilproof" reputation function. This is a formula where no matter how many fake identities an attacker creates, they cannot increase their total rank or influence in the system. One advanced approach is to compute reputation relative to a fixed, trusted node. If you trust Alice, and Alice trusts Bob, Bob has some inherited reputation. If an attacker creates ten thousand accounts that no one trusts, those accounts have a reputation of zero.
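
As a rough sketch of computing reputation relative to a fixed trusted node, the example below scores each account by its strongest trust path from a seed. The choice of "best path product" is an assumption made for illustration, as are the function name and the weight range of 0 to 1; it is one simple path-based scheme, not a claim about what any specific network implements.

```python
import heapq


def reputation_from_seed(trust, seed):
    """Score each node by its strongest trust path starting at `seed`.

    trust: {truster: {trusted: weight}} with weights in [0, 1].
    A path's strength is the product of its edge weights. Nodes that are
    unreachable from the seed do not appear in the result (reputation zero).
    """
    best = {seed: 1.0}
    heap = [(-1.0, seed)]  # max-heap via negated scores
    while heap:
        neg_score, node = heapq.heappop(heap)
        score = -neg_score
        if score < best.get(node, 0.0):
            continue  # stale entry; a stronger path was already found
        for neighbor, weight in trust.get(node, {}).items():
            candidate = score * weight
            if candidate > best.get(neighbor, 0.0):
                best[neighbor] = candidate
                heapq.heappush(heap, (-candidate, neighbor))
    return best


# Hypothetical graph: the Sybils vouch for each other, but nobody
# reachable from the seed vouches for them.
trust = {
    "seed": {"alice": 0.9},
    "alice": {"bob": 0.5},
    "sybil-0": {"sybil-1": 1.0},
    "sybil-1": {"sybil-0": 1.0},
}
rep = reputation_from_seed(trust, "seed")
print(rep.get("bob", 0.0), rep.get("sybil-0", 0.0))
```

However many accounts the attacker adds to the isolated group, their scores stay at zero. The weak point is an honest user who is tricked into trusting a Sybil, which is why this kind of score is usually combined with other defenses.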

The most effective reputation systems are those earned over time. A bot can be programmed to stake tokens instantly, but it's much harder to fake five years of consistent, helpful participation in a decentralized application (dApp). By rewarding longevity and quality of interaction, the network ensures that power stays in the hands of genuine contributors.

What is the main difference between a Sybil attack and a 51% attack?

A 51% attack is about controlling the majority of a network's hashing power or stake to rewrite the ledger. A Sybil attack is about creating many fake identities to trick the system into thinking there are more participants than there actually are, usually to manipulate voting or reputation.

Can Zero-Knowledge Proofs completely stop Sybil attacks?

They are a powerful tool, but not a complete solution on their own. ZKPs help verify that a user possesses a certain credential (like a unique biometric ID) without revealing the ID itself, but the system still needs a reliable way to ensure that the original credential wasn't issued to multiple people.

Why is Proof-of-Stake considered Sybil resistant?

Because it attaches a financial cost to influence. In a PoS system, your "vote" is weighted by the number of tokens you hold. Creating 1,000 accounts doesn't help an attacker if they only have 100 tokens; whether those tokens are in one wallet or split across a thousand, the total voting power remains the same.
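
The arithmetic is easy to check with a toy stake-weighted tally. Everything here is hypothetical: the wallet names, the `tally` helper, and the convention that stakes are counted in integer base units (1 token = 1,000 units) so they can be split evenly.

```python
from collections import defaultdict


def tally(stakes, votes):
    """Sum the stake behind each choice; one wallet's weight is its stake."""
    totals = defaultdict(int)
    for wallet, choice in votes.items():
        totals[choice] += stakes.get(wallet, 0)
    return dict(totals)


UNITS_PER_TOKEN = 1_000
honest = {"honest": 150 * UNITS_PER_TOKEN}

# Scenario A: the attacker keeps 100 tokens in one wallet.
single_stakes = {**honest, "attacker": 100 * UNITS_PER_TOKEN}
single_votes = {"honest": "no", "attacker": "yes"}

# Scenario B: the same 100 tokens split across 1,000 wallets.
split_stakes = {**honest, **{f"sybil-{i}": 100 for i in range(1_000)}}
split_votes = {"honest": "no", **{f"sybil-{i}": "yes" for i in range(1_000)}}

print(tally(single_stakes, single_votes))
print(tally(split_stakes, split_votes))
```

Both scenarios produce the same totals, because the tally counts stake rather than accounts.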

How does social graph analysis detect bots?

It looks at the topology of connections. Real users typically have a "small world" network structure with organic links to various groups. Sybil clusters often look like "stars" or isolated pods where many accounts are linked to one central controller but have few meaningful connections to the wider, honest community.
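
One simple way to quantify "few meaningful connections to the wider community" is the share of a group's links that leave the group. The sketch below assumes an undirected edge list and a candidate group that has already been identified; the function name is hypothetical, and any cutoff for "suspiciously low" would need tuning on real data.

```python
def external_link_ratio(edges, group):
    """Fraction of edges touching `group` that connect to nodes outside it."""
    members = set(group)
    internal = external = 0
    for a, b in edges:
        a_in, b_in = a in members, b in members
        if a_in and b_in:
            internal += 1
        elif a_in or b_in:
            external += 1
    total = internal + external
    return external / total if total else 0.0


# Hypothetical star: a controller linked to five accounts, with a single
# link from the pod to the honest community.
edges = [("controller", f"acct-{i}") for i in range(5)]
edges += [("acct-0", "honest-user"), ("honest-user", "other-user")]
pod = ["controller"] + [f"acct-{i}" for i in range(5)]
print(external_link_ratio(edges, pod))
```

A pod whose ratio sits near zero is mostly talking to itself, which matches the isolated "star" shape described above.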

Does a high reputation score guarantee a user is not a Sybil?

Not necessarily, but it makes the attack much more expensive. A sophisticated attacker can "age" accounts and simulate human behavior to build reputation. This is why the best systems use a layered approach, combining reputation with economic stakes and behavioral monitoring.

Next Steps for Network Security

If you're designing a dApp or a decentralized community, don't rely on a single defense. A combination of strategies is your best bet. Start by implementing a basic economic barrier, such as a small registration stake. Then, integrate a behavioral layer that tracks activity over time. For high-stakes governance, look into ZK-identity solutions that prove humanity without compromising privacy. The goal isn't to make the network impossible to enter, but to make it impossibly expensive to fake.